The market is still making the same analytical mistake with AI infrastructure: it keeps trying to force every “AI cloud” company into a single category. That instinct is understandable because the software market trained investors to look for one eventual winning model, one dominant interface, one best platform, one clean margin structure. But AI cloud does not appear to be developing that way. It is not converging toward a unified model. It is splitting into at least two distinct businesses.

One business is built around control. The other is built around convenience.

The control business sells bare-metal access, Kubernetes-level orchestration, reserved GPU capacity, and direct exposure to the newest systems with as little abstraction as possible. The customer in that market is not looking for a prettier interface or a more opinionated cloud wrapper. It is usually a hyperscaler, a frontier lab, a sovereign actor, or a very sophisticated enterprise buyer with its own infrastructure teams and its own preferences for how workloads should be run. What these customers want is not a cloud experience. They want the keys to the machine.

The convenience business is something different. It is software-abstracted infrastructure designed for customers who do not want to manage the entire stack themselves. Here the provider creates value by making deployment easier, reducing operational friction, adding security and governance, simplifying billing, and wrapping raw infrastructure in tools that make it accessible to enterprises and smaller builders. This part of the market looks more familiar because it resembles cloud and SaaS logic. Customers are buying time-to-use and risk reduction rather than maximum control.

That distinction matters because these are not merely two maturity stages of the same product. They may be two separate profit pools with different buyers, different moats, and different valuation frameworks. The market still tends to interpret them through one lens, usually a SaaS lens, and that is where a lot of current confusion comes from.

The easiest way to see the problem is to look at how investors ask the wrong question. They keep asking which neocloud has the better software platform. But that question only matters if all AI demand is eventually mediated through software abstraction. The most important recent AI infrastructure deals suggest that this is not true, at least not at the top end of the market. Some of the most strategic customers are showing a preference for priority access, direct control, and time-to-compute rather than convenience. They are buying usable compute capacity, not necessarily a thick software layer.

This is why the common critique that a company lacks a software moat can be misleading. That criticism assumes software is the universal source of value in AI cloud. It may be highly relevant in the convenience segment, but it may be much less relevant in the control segment. If the end customer is a hyperscaler or a frontier lab, the real moat may instead be GPU allocation, power access, site readiness, deployment certainty, and the ability to hand over a live cluster with minimal abstraction tax. In that world, software still exists, but it often lives in the support layer (provisioning, observability, fleet management, security, governance) rather than serving as the primary reason the customer buys.

That is the deeper reason why the recent debate around companies like Nebius and IREN has often generated more heat than light. A deal that validates Nebius’s model can simultaneously validate IREN’s strategic direction without proving parity between the two businesses. Those are different claims. The existence of large reserved-GPU and bare-metal contracts tells the market something important: external AI factories are real, third-party compute is strategically valuable, and sophisticated buyers are willing to purchase capacity without demanding a full SaaS-style wrapper. That is a category-level validation for the control segment. But it does not mean every company in that segment is equally positioned. Parity still depends on customer relationships, GPU access, financing capacity, site control, deployment speed, and execution credibility.

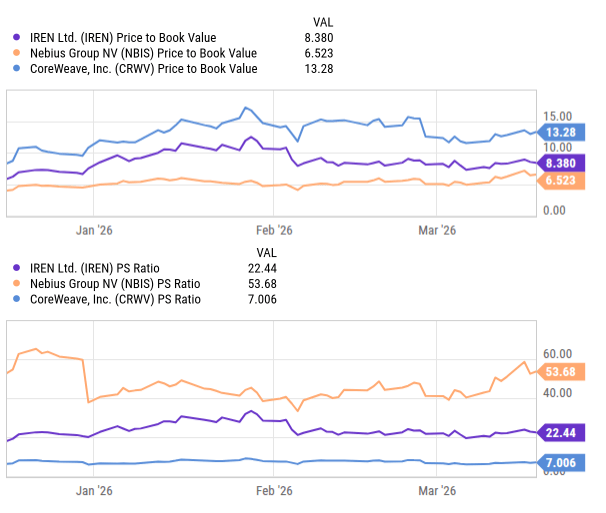

The valuation spread between IREN, Nebius, and CoreWeave is itself evidence that the market has not settled on a single lens for AI cloud. Price-to-sales and price-to-book tell conflicting stories because investors are implicitly rewarding different things in each name: contracted demand, software narrative, owned infrastructure, and capital structure. That is what a splitting category looks like. If AI cloud were converging into one standard model, the multiples would likely be cleaner and the relative framing more stable. Instead, the market is already treating these companies as different businesses, even if the language used to describe them still lumps them together.
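To make the "conflicting stories" point concrete, here is a minimal sketch of how price-to-sales and price-to-book can rank the same set of companies in opposite orders. The three companies and every figure below are hypothetical placeholders chosen only for illustration. They are not estimates for IREN, Nebius, CoreWeave, or any real company.

```python
from dataclasses import dataclass


@dataclass
class Company:
    """Hypothetical company; all figures are made-up placeholders in $bn."""
    name: str
    market_cap: float
    revenue: float      # trailing revenue
    book_value: float   # book equity

    @property
    def price_to_sales(self) -> float:
        return self.market_cap / self.revenue

    @property
    def price_to_book(self) -> float:
        return self.market_cap / self.book_value


def rank_by(companies, metric):
    """Return company names ordered from richest to cheapest on one multiple."""
    ordered = sorted(companies, key=lambda c: getattr(c, metric), reverse=True)
    return [c.name for c in ordered]


# Illustrative inputs only (assumptions, not real data).
companies = [
    Company("A", market_cap=20.0, revenue=1.0, book_value=10.0),  # asset-heavy, little revenue yet
    Company("B", market_cap=30.0, revenue=5.0, book_value=3.0),   # large contracted revenue, thin equity
    Company("C", market_cap=12.0, revenue=1.0, book_value=3.0),   # somewhere in between
]

ps_rank = rank_by(companies, "price_to_sales")
pb_rank = rank_by(companies, "price_to_book")

print("Richest to cheapest on P/S:", ps_rank)
print("Richest to cheapest on P/B:", pb_rank)
```

In this toy setup, the company that looks most expensive on sales looks cheapest on book, and vice versa. Neither multiple is wrong. Each one simply rewards a different attribute, which is the pattern you would expect if investors are pricing different business models under one label.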

The key point is that bifurcation should not be read as a bearish sign for the category. It may be the opposite. A market only sustains multiple durable operating models when demand is large enough and heterogeneous enough to support them. In AI cloud, hyperscalers and frontier labs appear to be pulling the market toward control (bare metal, reserved GPUs, minimal abstraction) while enterprises and smaller builders are pulling it toward convenience (managed infrastructure, software tooling, and easier deployment). That does not necessarily imply a zero-sum fight between the two. It may imply the market is expanding fast enough to support both.
